Wednesday, February 11, 2026

AI Is Teaching Me How to Be More Human

The machines handle the data. You handle the meaning.

Most individual contributors (ICs) in corporate environments have spent their careers as someone else's data input.

Collect information. Analyze it. Package it. Hand it up the chain so someone else can make a decision. The value of the IC was being a node in the justification machine — the person who built the spreadsheet, ran the numbers, assembled the deck. The data they produced gave leaders cover to act.

Now those same people are managing digital workers. AI agents that handle research, code, content, operations — entire teams that need direction. And for many of them, it's their first real experience of management. The natural instinct is to manage the way they were managed: demand data, require justification, wait for certainty before acting.

But that instinct is worth questioning. Because the system that created it was never designed to produce the best decisions. It was designed to produce the safest ones.

The Data Worship Problem

Corporate culture trained a generation to worship data. Not because data is bad — it's essential. But because data provides something professionals crave: cover.

If you have data supporting your decision, you can't be blamed for making it. The data said so. You did the analysis. You showed your work. Even if the decision was wrong, the process was defensible. Data became less about finding truth and more about manufacturing justification.

AI does this exceptionally well. Give it a dataset and a question, and it finds an answer faster than any analyst. Give it conflicting data and a preferred conclusion, and it builds the case. It's the ultimate justification machine, which is exactly what many of us were trained to be.

And the flip side is worse: without data, we do nothing. How many good ideas died because there wasn't a study to support them? How many opportunities passed because nobody could build the business case yet? Data worship doesn't just manufacture justification — it manufactures paralysis.

But AI can't ask what data is missing.

The Questions Data Can't Answer

There's a category of thinking that no amount of data addresses.

What's not being measured? The most important dynamics in a business often aren't in any dashboard. Team morale. Customer trust that hasn't been tested yet. The slow erosion of quality that won't show up in metrics until it's a crisis.

How could this tell a different story? We live in a world with so much data that you can construct a credible narrative for almost any position. The same numbers that prove a strategy is working can, with different framing, prove it's failing. Knowing which interpretation to trust requires something beyond the data itself.

What happens when two datasets conflict? Revenue is up but customer satisfaction is down. Velocity is high but code quality is declining. Hiring is strong but retention is weak. The data points in both directions simultaneously. The human skill is reading between the numbers to find what's actually happening — and having the conviction to act on that reading.

AI is exceptional at processing what's in front of it. It doesn't notice what isn't there. That gap — the space between available data and actual understanding — is where human judgment lives.

The Robots Were Already Here

AI is also exposing a type of professional who was already operating like a machine.

People who valued conformity over creativity. Who prized interchangeability — the ability to slot into any role and produce predictable, unremarkable output. Who wanted results that were robotic in nature: consistent, defensible, devoid of originality.

For decades, this was rewarded. The "safe pair of hands." The person who never made a bold call but never made a bad one either. The manager who ran everything through a framework and always had data to support the recommendation.

AI does all of that now. Faster. Cheaper. Around the clock.

The people who built careers on being human robots are discovering that actual robots have arrived — and they're better at the job. If your professional value was "I process information reliably and produce defensible outputs," that's what a model does by definition.

The Definition Was Always Incomplete

So many of us aspired to this professionally: know everything relevant. Account for every variable. Make no mistakes. Eliminate uncertainty. Produce the optimal answer.

AI already does this when it has access to all the information. That's essentially what these models are built to do: take the inputs they are given and produce the most likely output. If the definition of peak performance was "process all available information correctly," then we've already built the thing we were trying to become.

The fact that it doesn't feel like enough tells us something: that definition of excellence was always incomplete. It described the floor of good work, not the ceiling. The mechanical part. The part that was always going to be automated — we just didn't realize it because it was hard enough to feel important.

The best work was never about processing all the information correctly. It was about what you do when the information is incomplete, contradictory, or missing entirely.

The Cost of Being Wrong Just Changed

The entire apparatus of data worship — the org structures, the approval chains, the committee reviews, the months-long planning cycles — exists because the cost of making a change was historically enormous. Ship the wrong feature, and you've burned six months of engineering time. Launch the wrong strategy, and you've wasted a quarter's budget. The penalty for being wrong was so high that organizations designed themselves around de-risking every decision.

That calculus is breaking down.

With AI — particularly in software, but increasingly in other areas — the cost of iteration has collapsed. You don't have to be precise on the first attempt. You can implement multiple possibilities in parallel and let reality tell you which one works. You can test an idea in days that used to take months to spec, approve, and build.

When iteration is cheap, you don't need the data to be perfect before you start. You can start, gather data on what actually happens, and adjust. The data comes after the decision, not before it.

This is the real disconnect in the "are we waiting for AGI?" conversation. One camp is waiting for AI to be good enough to do things the way they've always been done — slot into existing processes, replace existing roles, execute existing playbooks. That camp will be waiting a long time, because the old playbooks were designed around constraints that no longer exist.

The other camp is asking a different question: what if we free ourselves to work in a way that's actually more human? More creative. More taste-driven. More willing to try things without a committee's blessing. Make decisions based on experience and intuition, ship fast, and gather data on the results instead of gathering data to justify the attempt.

That's a world where people want to do more work, not less. Because the work becomes genuinely interesting when it's driven by conviction instead of cover.

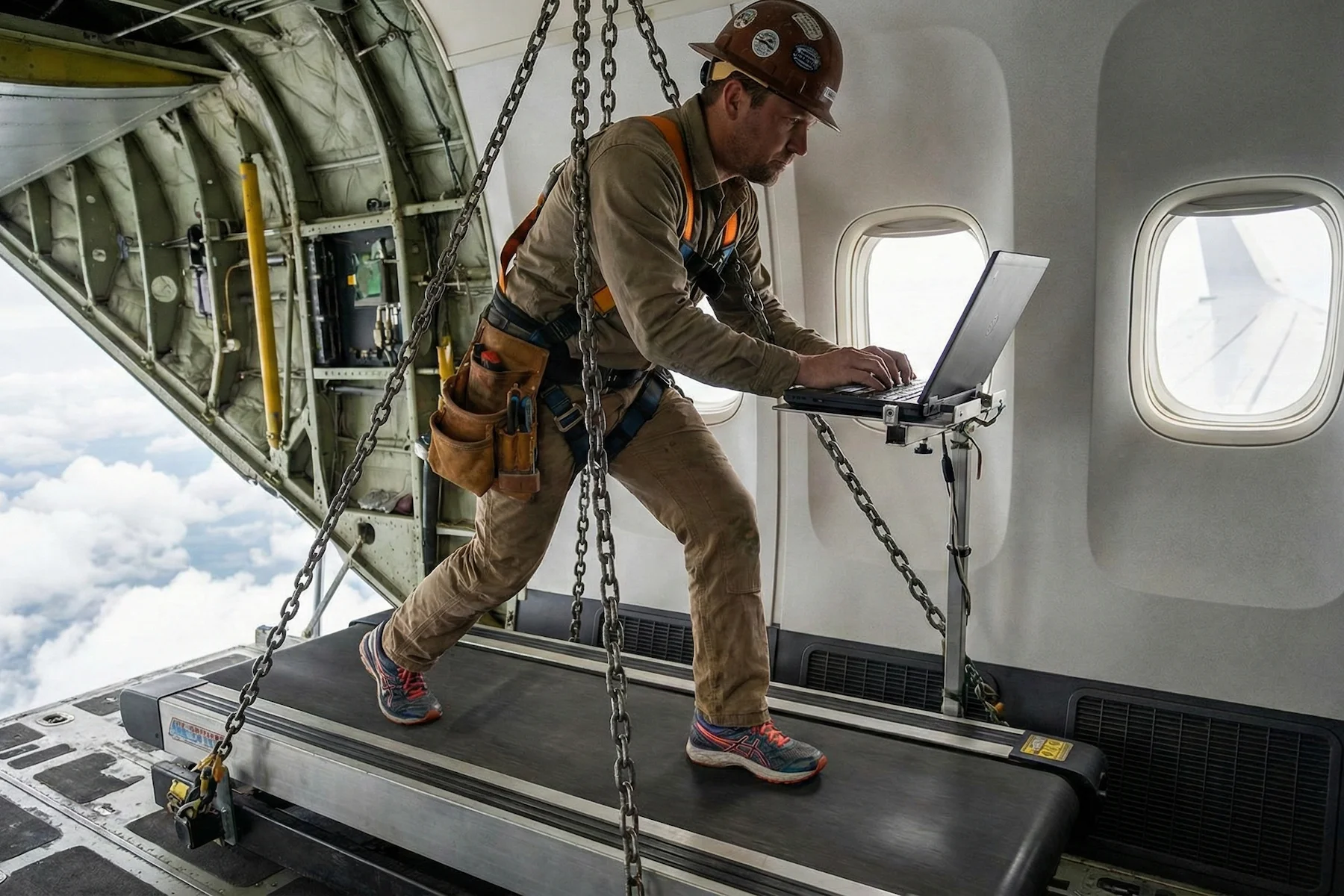

Operating Without the Full Picture

The most effective leaders I've worked with share a trait that doesn't show up on any resume: they're comfortable making decisions without complete information.

They make calls before all the data is in. They trust their experience when the spreadsheet says something different. They sense when a strategy is wrong before the metrics confirm it. They have taste, humility, and integrity — an instinct for what's right that they can't always articulate but that proves correct more often than not.

It's accumulated pattern recognition from years of being in the work — the kind of domain understanding that doesn't come from datasets. It comes from watching what happens when the data is wrong.

Making a decision at 60% certainty. Reading a room no dashboard captures. Knowing which of two conflicting signals to trust because you've seen the pattern play out before. Understanding that the numbers are technically correct but strategically misleading.

These are leadership skills. They've always been leadership skills. What's changed is that everything around them — the data gathering, the analysis, the synthesis, the presentation — has been automated. The mechanical work that used to surround human judgment is gone. What's left is the judgment itself, standing alone.

Either you have it or you're developing it. There's no busywork left to hide behind.

The Reframe

AI hasn't diminished what makes us human. It's clarified it.

For years, the distinctly human skills — intuition, taste, the ability to hold contradictions and still choose a direction — were buried under mechanical work that felt important because it was hard. Collecting data. Formatting reports. Building decks to justify decisions already made. That labor created the illusion that the data was the contribution.

Now the mechanical work is handled. What remains is the thinking that only humans do: setting direction without a map. Making judgment calls without complete evidence. Creating something that didn't exist in any dataset.

AI is teaching me this every day. Not by replacing my judgment, but by handling everything around it — and making it impossible to pretend the mechanical work was ever the point.

The most human skill you could be developing right now is learning to lead when you can't prove you're right. To trust experience over spreadsheets. To have taste in a world drowning in data. To make the call that no model would make, because no model has your context, your values, or your skin in the game.

The data was never the point. What you do when the data isn't enough — that's where leadership lives.

