Tuesday, February 3, 2026

Cost Per Outcome

The only AI metric that actually matters.

Here's the only question I ask now when evaluating AI for a task: What's the cost per outcome?

Not "is it smart enough?" Not "can it do this perfectly?" Just: can it produce this outcome cheaper than I can — including the time I'll spend checking its work?

When the answer is yes, I use AI. When it's no, I don't. The capability debates, the AGI speculation, whether it's "really" intelligent — none of that matters for practical decision-making.

What I've noticed is that most people are waiting for AI to do work the way it's always been done. Fit into existing processes. Slot into current workflows. And if that's the requirement, AI falls short constantly.

But if you're willing to change how work gets done — to redesign workflows around what AI is actually good at — the tools we have today go much further than most people let them.

Our resistance to change is the real limiting factor, not AI capability.

When AI can produce an outcome cheaper than a human — including the cost of a human checking the work — that job changes forever. Not because AI became "generally intelligent," but because the economics crossed a threshold.

The Only Metric That Matters

Stop thinking about AI capability. Start thinking about cost per outcome.

An outcome is a completed unit of work: a drafted email, a debugged function, a summarized document, a designed component, an analyzed dataset. Something that was not done, and now is done.

The cost of that outcome has two possible paths:

Human produces it:

- Time spent × hourly rate

- Iterations happen invisibly (drafts, revisions, false starts)

- Final product delivered

AI produces it:

- Token cost × attempts to get it right

- Human review time × hourly rate

- Final product delivered (after approval)

When the AI path costs less than the human path for equivalent quality, the economics have spoken. That work belongs to AI now.

The Formula

Here's how to think about it:

Human Cost = Hours × HourlyRate

Simple. If a senior developer at $150/hour spends 4 hours on a feature, that's $600.

AI Cost = (PrepHours × HourlyRate) + (Tokens × TokenCost × Attempts) + (ReviewHours × HourlyRate)

More complex. At the same $150/hour, you spend 15 minutes designing the work package ($37.50). Each AI attempt costs $0.50 in tokens, and it takes 5 attempts ($2.50). Then you spend 30 minutes reviewing and correcting ($75). Total: $115.

In this example, AI wins by roughly 5x ($600 vs. $115).
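The two formulas above can be written as a small calculator. This is a minimal sketch, not a definitive model: the names `AiTask`, `human_cost`, and `ai_cost` are mine, and I've folded Tokens × TokenCost into a single assumed dollar cost per attempt. The numbers are the ones from the example.

```python
from dataclasses import dataclass


@dataclass
class AiTask:
    """Inputs to the AI side of the formula (field names are illustrative)."""
    prep_hours: float        # time spent designing the work package
    cost_per_attempt: float  # token spend for one attempt, in dollars
    attempts: int            # attempts needed to get an acceptable result
    review_hours: float      # time spent reviewing and correcting


def human_cost(hours: float, hourly_rate: float) -> float:
    # Human Cost = Hours x HourlyRate
    return hours * hourly_rate


def ai_cost(task: AiTask, hourly_rate: float) -> float:
    # AI Cost = prep time + token spend across attempts + review time
    prep = task.prep_hours * hourly_rate
    tokens = task.cost_per_attempt * task.attempts
    review = task.review_hours * hourly_rate
    return prep + tokens + review


rate = 150.0
human = human_cost(hours=4, hourly_rate=rate)
ai = ai_cost(AiTask(prep_hours=0.25, cost_per_attempt=0.50,
                    attempts=5, review_hours=0.5), rate)
print(f"human ${human:.2f}, AI ${ai:.2f}, ratio {human / ai:.1f}x")
# human $600.00, AI $115.00, ratio 5.2x
```

Note where the $115 comes from: $112.50 of it is human time. The token spend barely registers.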

But change the variables and it flips. Complex work requires more prep time to specify correctly. It requires more attempts to get right. And it requires more review time to verify. At some point, you're spending as much time preparing and reviewing as it would have taken to do the work yourself.

This is the offshore dev problem. Anyone who's managed offshore development teams knows this feeling: you spend so much time writing detailed specs and reviewing output that you wonder if you should have just done it yourself. AI has the same dynamic. The prep tax and review tax scale with complexity.

The question becomes: at what complexity level does the math stop working?
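One way to put a number on that question is to solve the formula for the break-even point: how many hours of prep plus review can you afford before doing it yourself is cheaper? A rough sketch, assuming the same cost model as above; the function names are hypothetical.

```python
def break_even_overhead_hours(human_hours: float, hourly_rate: float,
                              cost_per_attempt: float, attempts: int) -> float:
    """Max combined prep + review hours at which the AI path still costs
    no more than the human path."""
    token_spend = cost_per_attempt * attempts
    # Setting AI Cost == Human Cost and solving for (prep + review) hours:
    return human_hours - token_spend / hourly_rate


def ai_wins(prep_hours: float, review_hours: float, budget_hours: float) -> bool:
    # AI is cheaper only while the human overhead stays under the budget.
    return prep_hours + review_hours < budget_hours


# Same example: a 4-hour task at $150/hour, $0.50 per attempt, 5 attempts.
budget = break_even_overhead_hours(4, 150.0, 0.50, 5)
print(f"overhead budget: {budget:.2f} hours")  # overhead budget: 3.98 hours
print(ai_wins(0.25, 0.5, budget))  # True: 45 minutes of overhead
print(ai_wins(2.0, 2.0, budget))   # False: 4 hours of overhead
```

Under these assumptions the token spend moves the break-even point by about a minute. The math stops working when prep and review together approach the time the task would have taken you by hand.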

The Hidden Cost of Verification

Here's what makes AI economics tricky: you have to check the work.

A human employee produces output you can generally trust — not because humans don't make mistakes, but because you've calibrated your trust over time. You know their strengths, their weaknesses, when to look closer.

AI doesn't have that trust built up. And worse, it fails in ways that look correct. Confident, well-formatted, completely wrong.

So you review everything. And that review time is the hidden tax that determines whether AI actually saves money.

The people getting massive productivity gains from AI aren't the ones blindly accepting output. They're the ones who've gotten fast at verification — who can scan AI work and spot problems quickly. Their review tax is low.

This is why I use OpenClaw despite the risks — I've built up enough verification skill that my review tax is manageable. Someone without that skill would spend more time checking the work than it would have taken to do it themselves.

The Recursive Optimization

Here's where it gets interesting: what if you use AI to reduce the prep tax and review tax?

AI can help you write better specs. AI can help you review AI output. Each layer of automation compresses the human time in the formula.

And then there's the parallel model: instead of iterating linearly (attempt → review → fix → review), you run the whole process multiple times in parallel and select the best outcome.

We're already doing this with image generation. Nobody generates one image and iterates on it. You generate ten and pick the best one. The "review" becomes selection, not correction. That's a fundamentally different — and faster — workflow.

LLM providers are testing this with text: multiple response options for you to choose from. The model isn't confident which is best, so it gives you options. Your job shifts from "fix this" to "pick the best."

This is coming to complex work soon. Why review one AI-written draft when you can skim five and pick the strongest? Why debug one implementation when you can compare three approaches?

The cost per outcome drops dramatically when you can parallelize generation and reduce review to selection.
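To see why, here is a sketch comparing the two workflows. Everything in it is an assumption for illustration: the function names, the round and candidate counts, and the guess that skimming five candidates takes about as long as one careful review. It also ignores the case where none of the candidates is good enough.

```python
def linear_cost(prep_hours: float, hourly_rate: float, cost_per_attempt: float,
                rounds: int, review_hours_per_round: float) -> float:
    """attempt -> review -> fix -> review: every round pays for tokens
    and for a human review."""
    prep = prep_hours * hourly_rate
    per_round = cost_per_attempt + review_hours_per_round * hourly_rate
    return prep + rounds * per_round


def parallel_cost(prep_hours: float, hourly_rate: float, cost_per_attempt: float,
                  candidates: int, selection_hours: float) -> float:
    """Generate several candidates at once, then pay for one selection pass."""
    prep = prep_hours * hourly_rate
    generation = cost_per_attempt * candidates
    selection = selection_hours * hourly_rate
    return prep + generation + selection


RATE = 150.0  # same hourly rate as the earlier example
linear = linear_cost(0.25, RATE, 0.50, rounds=3, review_hours_per_round=0.25)
parallel = parallel_cost(0.25, RATE, 0.50, candidates=5, selection_hours=0.25)
print(f"linear ${linear:.2f} vs parallel ${parallel:.2f}")
# linear $151.50 vs parallel $77.50
```

The parallel run spends more on tokens (five attempts instead of three) and still comes out cheaper, because it pays for human attention once instead of once per round.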

What Jobs Have Crossed the Threshold?

Let's be concrete. Where has AI cost per outcome already dropped below human cost?

Clearly crossed:

- First-draft writing (emails, documentation, marketing copy)

- Code scaffolding and boilerplate

- Data transformation and formatting

- Research synthesis (gathering and summarizing information)

- Translation (for most use cases)

- Simple image generation

For these tasks, even with review time factored in, AI is cheaper for most people in most situations.

Crossing now:

- Complex code implementation (with experienced reviewers)

- Visual design iteration

- Customer service responses

- Legal document review

- Financial analysis and reporting

These depend heavily on the reviewer's skill. An experienced developer reviews AI code in minutes. A junior developer might spend hours and still miss problems. The threshold is different for different people.

Not yet crossed:

- Novel research and invention

- High-stakes decision making

- Complex negotiation

- Physical skilled labor

- Anything requiring real-world manipulation

The review tax here is too high, the error cost too severe, or the capability gap too wide.

The Threshold Keeps Moving

Here's why this framing matters: you can predict what's next.

A job crosses the threshold when:

- AI capability improves (fewer attempts needed)

- Token costs drop (cheaper per attempt)

- Review tools improve (lower review tax)

- Trust calibrates (you learn when to check closely vs. skim)

All four are happening constantly. Some jobs that haven't crossed the threshold today will cross it next year. The economic pressure is relentless.

You can model your own work: What outcomes do you produce? What's your current cost per outcome? Where would AI need to be for the economics to flip?

Then watch those variables. When they cross, adapt.

The New Job Description

Here's what happens when AI cost drops below human cost for an outcome: the human doesn't disappear. The human role changes.

You stop producing outcomes. You start:

- Designing work packages: Breaking complex goals into outcomes AI can produce

- Reviewing outputs: Checking that outcomes meet requirements

- Handling exceptions: Dealing with the cases AI can't

This is management. Not in the org-chart sense, but in the operational sense. You're directing work and ensuring quality rather than doing the work yourself. This is how the 100x solo operator actually operates — not by working 100x harder, but by managing AI that produces outcomes at a fraction of the cost.

The people who complain that AI "doesn't do exactly what I want" are often failing at work package design. They're giving vague direction and expecting perfect output, which doesn't work with human employees either. Stop re-explaining yourself: build systems that give AI the context it needs upfront, and your attempts per outcome drop dramatically.

The people who complain that AI "makes too many mistakes" are often failing at efficient review. They haven't built systems to verify output quickly, so their review tax eats their savings.

The skills that matter in an AI-augmented world are management skills: clear delegation, efficient verification, appropriate trust calibration.

What This Means For You

The capability is already here. The question is whether you're willing to change how you work.

Ask yourself:

- What outcomes do I produce in my job?

- What's my current cost per outcome?

- Which of those outcomes could AI produce cheaper — if I redesigned the workflow?

- What's stopping me from doing that?

If the answer to that last question is "the way we've always done it," that's the bottleneck. Not AI capability. Not AGI. Your process.

The uncomfortable truth: your job isn't to produce outcomes anymore. Your job is to produce outcomes at the lowest cost per outcome. Sometimes that means doing the work. Increasingly, it means managing AI that does the work — but only if you're willing to change how the work gets done.

The people waiting for AI to fit their existing workflows will be waiting a long time. The people redesigning their workflows around AI's strengths are already there.

Which one are you?

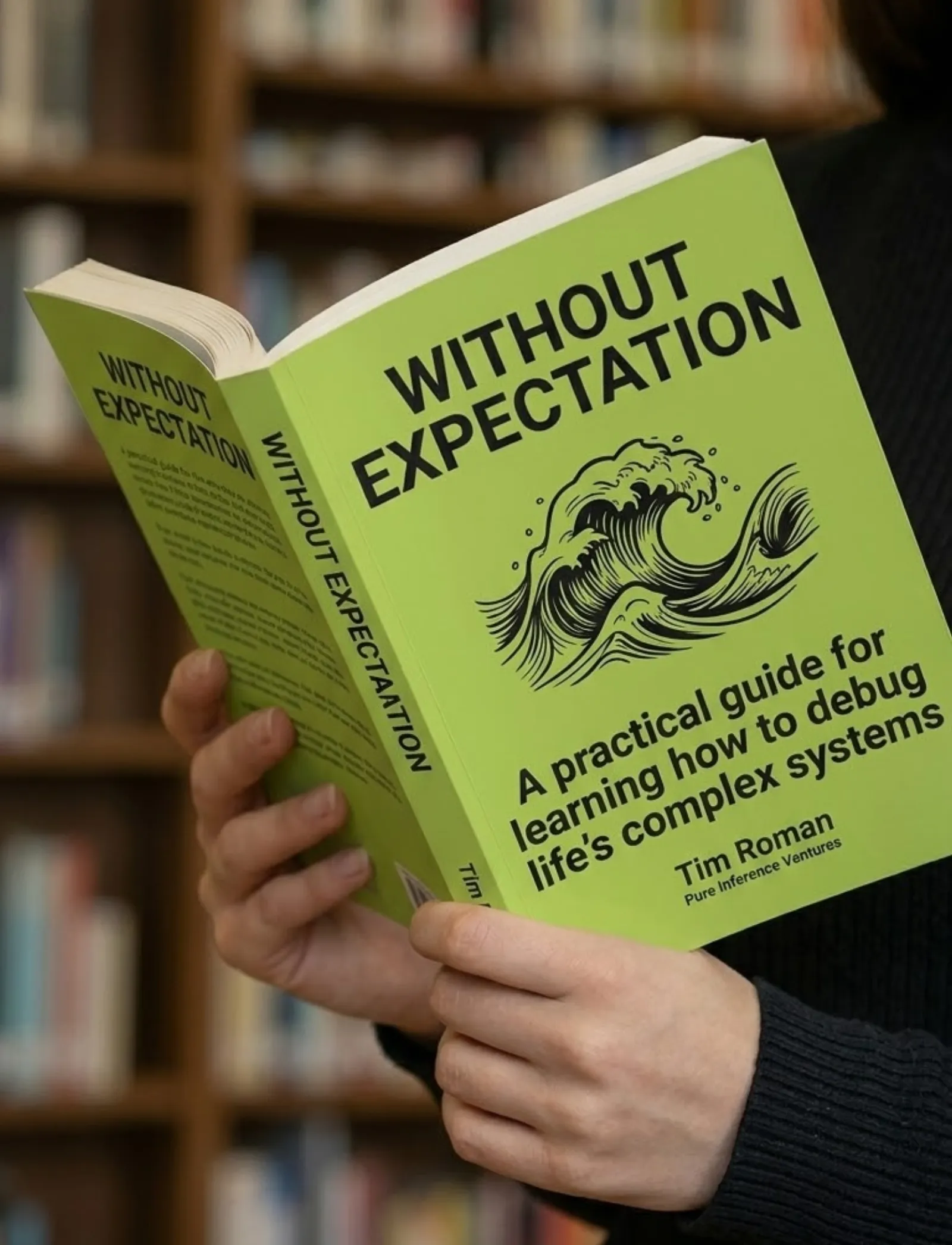

Book & App — Launching September 2026

Without Expectation

Debugging Life's Complex Systems

The same systematic approach engineers use to debug complex systems — applied to the complex system of your life. Learn to observe without judgment, distinguish symptoms from root causes, and run small experiments that compound into massive change.

- 23 chapters

- AI prompt templates

- iOS companion app

- Print, digital & audio

If you liked this, you might also like...

The Compounding Loop

We're optimizing our workflows while AI optimizes its own. The gains compound faster than anyone expected. Here's how I'm actually using this — and why the same pattern works beyond software.

The 100x Solo Operator

The 100x developer is a meme. The 100x solo operator is a business model. Here's what it actually looks like to run a company with AI as every department.